The Correct Way to Measure Inference Time of Deep Neural Networks | by Amnon Geifman | Towards Data Science

Object detection:Time for processing a batch of images at once is almost equal to processing them sequentially in order · Issue #4266 · tensorflow/models · GitHub

![PP-YOLO Object Detection Algorithm: Why It's Faster than YOLOv4 [2021 UPDATED] - Appsilon | Enterprise R Shiny Dashboards](https://appsilon.com/wp-content/uploads/2020/09/pp-yolo-frameratevsmethod.png)

PP-YOLO Object Detection Algorithm: Why It's Faster than YOLOv4 [2021 UPDATED] - Appsilon | Enterprise R Shiny Dashboards

Electronics | Free Full-Text | On Inferring Intentions in Shared Tasks for Industrial Collaborative Robots | HTML

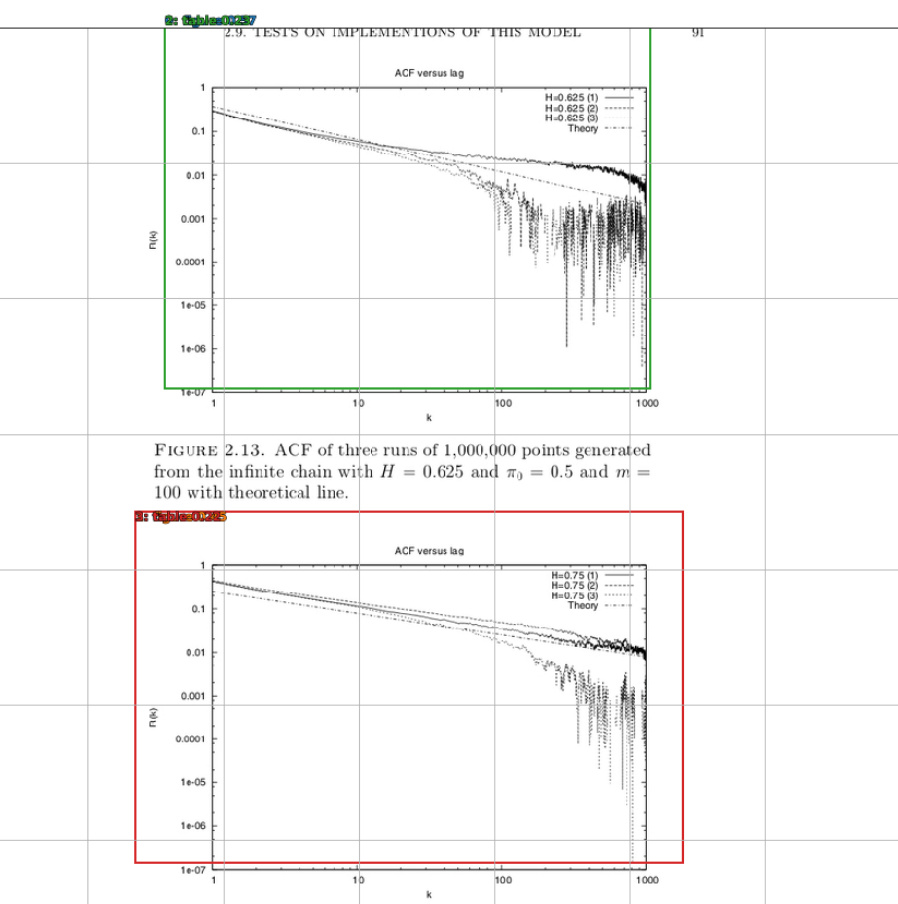

Amazon.com: Time Series: Modeling, Computation, and Inference, Second Edition (Chapman & Hall/CRC Texts in Statistical Science): 9781498747028: Prado, Raquel, Ferreira, Marco A. R., West, Mike: Books

How Acxiom reduced their model inference time from days to hours with Spark on Amazon EMR | AWS for Industries

Speed-up InceptionV3 inference time up to 18x using Intel Core processor | by Fernando Rodrigues Junior | Medium

FPN rpn inference time is faster than faster RCNN? · Issue #336 · facebookresearch/Detectron · GitHub

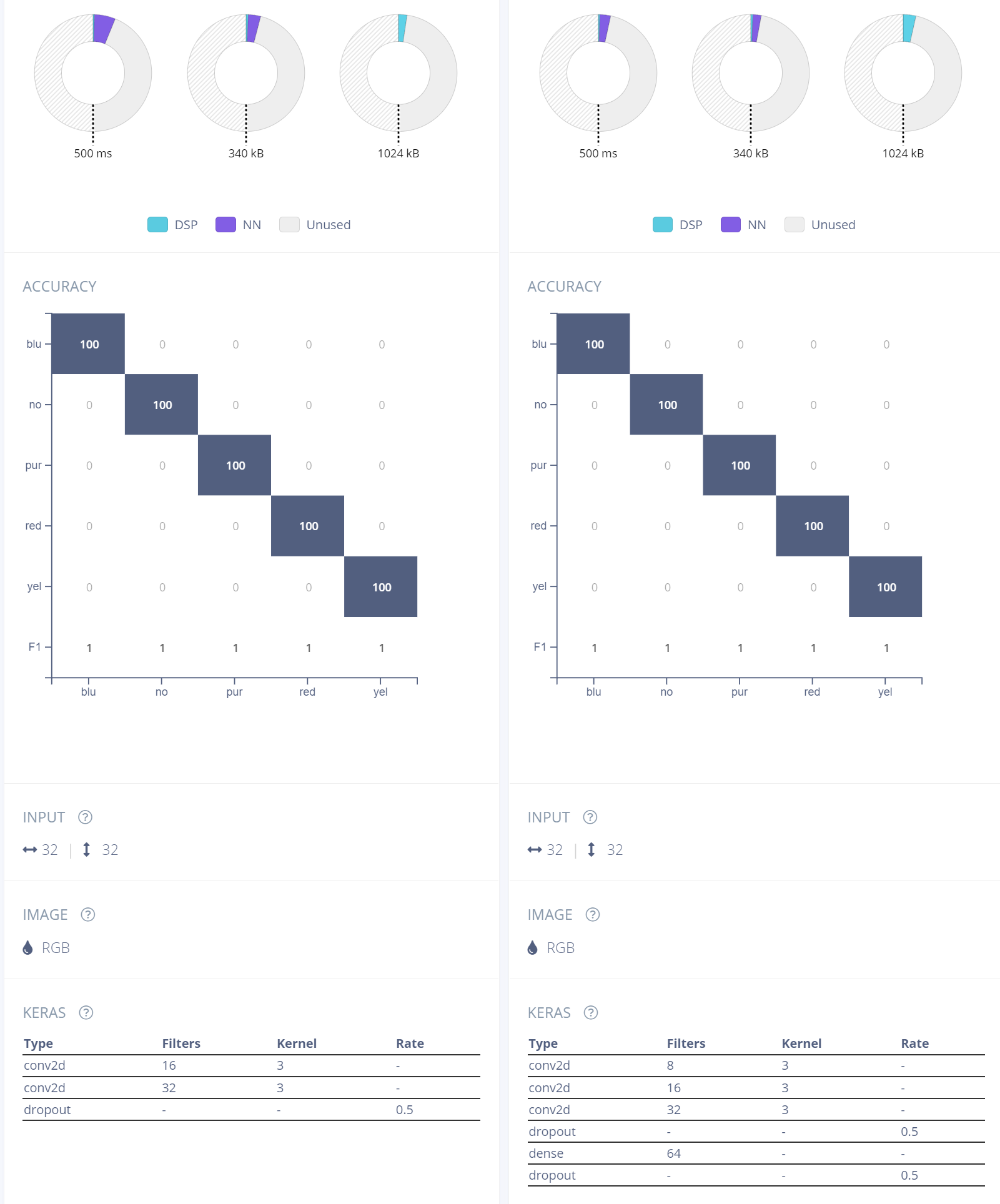

System technology/Development of quantization algorithm for accelerating deep learning inference | KIOXIA